I think there is a non-negligible risk of powerful AI systems being an existential or catastrophic threat to humanity. I will refer to this as “AI X-Risk.”

However, it is important to understand the arguments of those you disagree with. In this post, I aim to provide a broad summary of arguments suggesting that the probability of AI X-Risk over the next few decades is low if we continue current approaches to training AI systems.

Before describing counterarguments, here is a brief overview of the AI X-Risk position:

Continuing the current trajectory of AI research and development could result in an extremely capable system that:

Doesn’t care sufficiently about humans

Wants to affect the world

The more powerful a system, the more dangerous minor differences in goals and values become. If a powerful system doesn’t care about something, it will sacrifice that thing to whatever extent helps it pursue an objective or take a particular action. Encoding everything we care about into an AI remains an unsolved challenge.

As written by Professor Stuart Russell, author of Artificial Intelligence: A Modern Approach:

A system that is optimizing a function of n variables, where the objective depends on a subset of size k<n, will often set the remaining unconstrained variables to extreme values; if one of those unconstrained variables is actually something we care about, the solution found may be highly undesirable.
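To make Russell's point concrete, here is a toy sketch in Python. It is not taken from Russell; the three variable names, the shared budget of 10 units, and the brute-force search are all assumptions chosen for illustration. The objective depends on only one of three variables (k = 1, n = 3), and all three draw on the same limited resource.

```python
from itertools import product

BUDGET = 10  # hypothetical shared resource (e.g. land or energy units)


def objective(widgets, farmland, parks):
    """Toy objective: rewards only the first variable, ignores the other two."""
    return widgets


def best_allocation(budget=BUDGET):
    """Brute-force search over every integer allocation within the budget."""
    candidates = (
        alloc
        for alloc in product(range(budget + 1), repeat=3)
        if sum(alloc) <= budget
    )
    return max(candidates, key=lambda alloc: objective(*alloc))


if __name__ == "__main__":
    # The optimizer spends the whole budget on the rewarded variable and
    # leaves the two variables it does not "care" about at zero.
    print(best_allocation())  # (10, 0, 0)
```

Nothing in the objective says farmland or parks are bad. They end up at an extreme value only because they compete for resources with the one variable the objective rewards.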

In some sense, training a powerful AI is like bringing a superintelligent alien species into existence. If you would be scared of aliens orders of magnitude more intelligent than us visiting Earth, you should be scared of very powerful AI.

The following arguments will question one or more aspects of the case above.

Superintelligent AI won’t pursue a goal that results in harm to humans

Proponents of this view argue against the idea that a highly optimized, powerful AI system will likely take actions that disempower or drastically harm humanity. They claim that either the system will not behave as a strong goal-oriented agent or that the goal will be fully compatible with not harming humans.

For example, Yann LeCun, a pioneering figure in the realm of deep learning, has written:

We tend to conflate intelligence with the drive to achieve dominance. This confusion is understandable: During our evolutionary history as (often violent) primates, intelligence was key to social dominance and enabled our reproductive success. And indeed, intelligence is a powerful adaptation, like horns, sharp claws or the ability to fly, which can facilitate survival in many ways. But intelligence per se does not generate the drive for domination, any more than horns do.

It is just the ability to acquire and apply knowledge and skills in pursuit of a goal. Intelligence does not provide the goal itself, merely the means to achieve it. “Natural intelligence”—the intelligence of biological organisms—is an evolutionary adaptation, and like other such adaptations, it emerged under natural selection because it improved survival and propagation of the species. These goals are hardwired as instincts deep in the nervous systems of even the simplest organisms.

But because AI systems did not pass through the crucible of natural selection, they did not need to evolve a survival instinct. In AI, intelligence and survival are decoupled, and so intelligence can serve whatever goals we set for it.

LeCun’s argument implies that an AI is unlikely to execute perilous actions unless it possesses the drive to achieve dominance. Still, undermining or harming humanity could be an unintended side-effect or instrumental goal while the AI pursues another unrelated objective. Achieving most goals becomes easier when one has power and resources; taking power and resources from humans is one way to accomplish this. However, it’s unclear that all goals incentivize disempowering humanity. Furthermore, even if taking over the world and leveraging all its resources is a viable way of achieving a particular goal, a completely different approach could be much simpler and more efficient.

Another valid counterargument may question the likelihood of a powerful AI behaving like a goal-oriented agent in the first place. Under sufficient optimization pressure, the tendency towards goal-directed behavior could emerge in AIs, as exhibiting such behavior correlates with high performance on most AI training objectives. However, this may not be the case for all training objectives. Katja Grace’s article “Counterarguments to the basic AI x-risk case” describes in more detail why an AI would not necessarily be a goal-directed agent with a single goal it relentlessly pursues.

The current deep learning paradigm lacks a necessary ingredient

This argument posits that the current approach to AI training imposes an inherent ceiling on the system's ultimate potential, intelligence, and real-world functionality. This limitation could be linked to the absence of certain ingredients found in the biological evolution of sentient beings. For instance, Yann LeCun has claimed: “Both supervised learning and reinforcement learning are insufficient to emulate the kind of learning we observe in animals and humans” and “there's going to be a more satisfying and possibly better solution that involves systems that do a better job of understanding the way the world works.”

Advocates of this perspective argue that a truly intelligent, capable AI must be introduced to a version of the "real world," where it can take actions, gather sensory data, and learn better causal relationships between actions and world states. They claim that AI systems trained under the current paradigm will be handicapped by the restrictive nature of their training regimen. In comparison, humans are specifically optimized, via evolution, to manipulate and control the world through their physical bodies.

They may also claim that AI needs more brain-like structures to carry out the computations required for accurate world predictions. This view lacks a rigorous mathematical basis, since many non-brain-like architectures can likely represent a model that is functionally equivalent to a brain-like one. The core argument might instead be that imposing a brain-like architecture during training is much more parameter-efficient. This could accelerate the convergence of the training process on a competent model, impacting AI timelines.

Another potential failing of the current paradigm could be that information inherent in biological organisms or the physical world is missing from the datasets used to train machine learning systems today. This may underlie the lack of “common sense” and low sample efficiency many skeptics of transformative AI point to.

We will run into resource constraints before we reach superintelligent AI

Various arguments claim that the lack of high-quality data or computational resources may eventually hamper our development of powerful AI systems.

Today, many human workers are employed to curate data or create high-quality prompt completions, aiding in fine-tuning and enhancing AI system outputs. However, as the complexity of the tasks we wish to solve increases, the required data's quality, difficulty, and cost may escalate dramatically.

We may reach a critical juncture where further scientific and technological advancement through AI becomes very difficult. This bottleneck could occur once we exhaust what can be inferred from the existing corpus of human research and need more high-quality data on areas humans have not yet explored. This perspective overlooks the possibility of AIs developing the ability to internally simulate physical phenomena or acquiring a deep understanding of fundamental sciences, which might allow them to predict the outcomes of unperformed experiments and thereby advance human knowledge. However, reaching such a level of robust understanding and intelligence may require computation far beyond what is practical or economically feasible. Thus, the road to this highly advanced AI may be steeper than anticipated.

There are economic disincentives for developing dangerous AI

The article “Why transformative artificial intelligence is really, really hard to achieve” discusses how “the transformative potential of AI is constrained by its hardest problems.” Suppose we can build an AI good at some subset of problems humans need to solve. This may fail to catalyze substantial GDP growth if we encounter bottlenecks in other tasks that AI finds challenging, many of which involve physical interaction with the world. This limitation could undermine the economic incentive for AI advancement, given that large-scale training runs are exceptionally costly. For instance, the training process for an AI model akin to GPT-4 requires an investment of hundreds of millions of dollars.

Quoting from the article:

Imagine that AI speeds up writing but not construction. Productivity increases and the economy grows. However, a think-piece is not a good substitute for a new building. So if the economy still demands what AI does not improve, like construction, those sectors become relatively more valuable and eat into the gains from writing. A 100x boost to writing speed may only lead to a 2x boost to the size of the economy.

AI must transform all essential economic sectors and steps of the innovation process, not just some of them. Otherwise, the chance that we should view AI as similar to past inventions goes up.
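The arithmetic behind a claim like "100x in one sector, roughly 2x overall" can be sketched with a simple two-sector model. The model below is an assumption for illustration, not the one used in the quoted article: it combines writing and construction with a constant-elasticity-of-substitution (CES) aggregator, equal weights, and an elasticity of substitution of 0.5 (`rho = -1`), meaning the two sectors are poor substitutes for each other.

```python
def ces_output(writing, construction, rho=-1.0, share=0.5):
    """CES aggregate of two sectors.

    rho = -1 corresponds to an elasticity of substitution of 0.5.
    All parameter values here are illustrative assumptions.
    """
    return (share * writing**rho + (1 - share) * construction**rho) ** (1 / rho)


baseline = ces_output(1.0, 1.0)   # both sectors at their starting productivity
boosted = ces_output(100.0, 1.0)  # writing becomes 100x more productive
growth = boosted / baseline

if __name__ == "__main__":
    print(f"Economy grows by a factor of {growth:.2f}")  # about 1.98
```

Under these assumptions, total output is capped near 2x no matter how large the writing boost gets, because the unimproved sector becomes the binding constraint. A higher elasticity of substitution would loosen that cap.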

Furthermore, if a world event causes a major economic downturn, the incentives to push forward technological progress could wane significantly.

Another economic argument is that organizations have no desire to squander resources on training AI systems that fail to align with their objectives and could potentially cause unintended harm. As a result, there are compelling incentives to thoroughly monitor and scrutinize AI development to guarantee that resultant systems operate according to our intentions. This argument, however, is somewhat undermined by the potential existence of various forms of deceptive AI, which could appear safe in testing environments but act dangerously in novel, out-of-distribution situations. The likelihood of deceptive AI or objective misgeneralization should inform your degree of confidence in the emergence of safe AI. However, as most AI developers are profoundly interested in ensuring their products are beneficial and devoid of harm, this could result in a higher likelihood of safer AI.

We will get nice AI by default

The vast majority of data leveraged in AI training today, such as massive corpora of internet text like Common Crawl, and human-annotated fine-tuning datasets, originates from human activities. This data tends to capture and convey humans' preferences, values, and conceptual frameworks. Therefore, it's not unreasonable to speculate that the resultant AI minds, rather than resembling randomly sampled alien intelligences, will mirror human sensibilities and notions of what is good.

Consider this analogy: a child raised in a household espousing violent fascist ideologies may develop behaviors and attitudes that reflect these harmful beliefs. Conversely, the same child nurtured in a peaceful, loving environment may manifest diametrically opposite characteristics. Similarly, we could expect an AI trained on human data that encapsulates how humans see the world to align with our perspective.

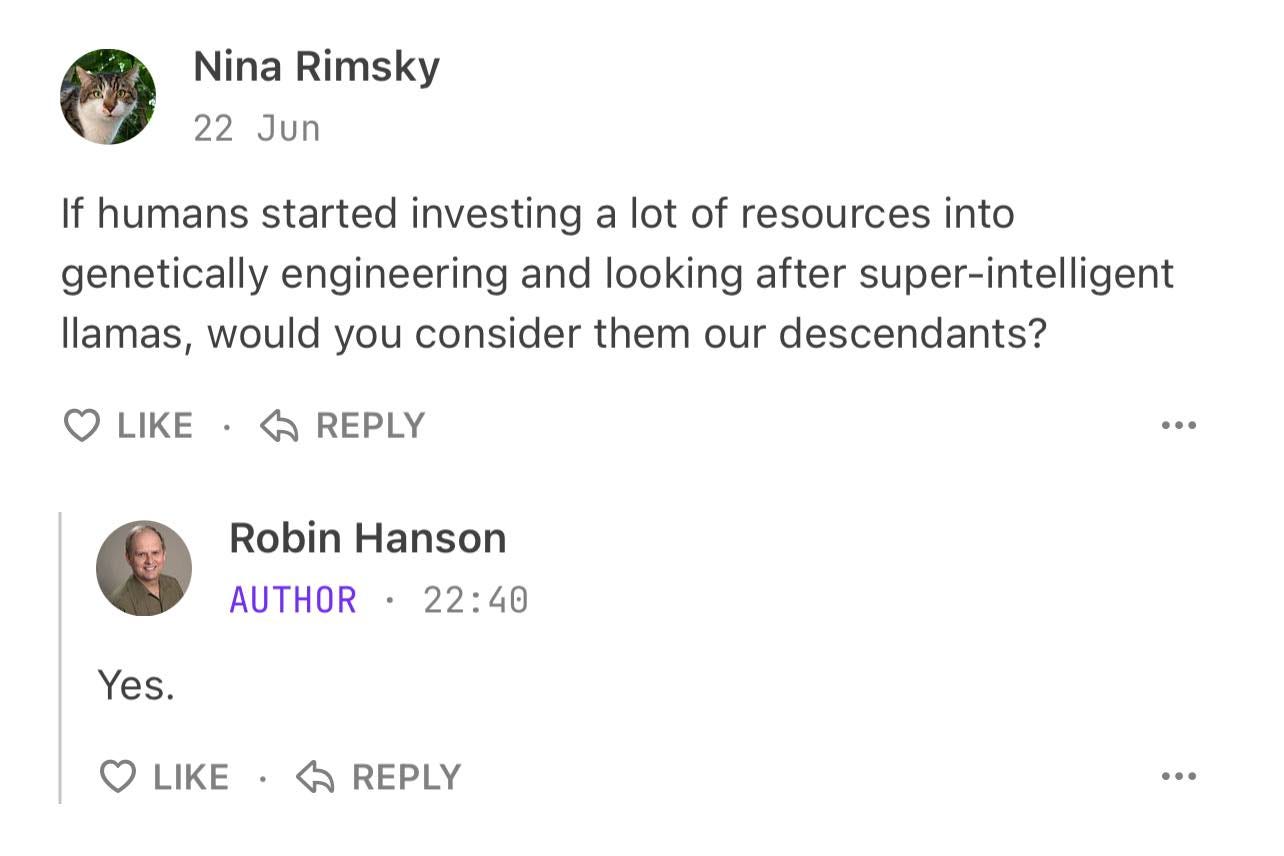

Bonus: AI takeover is good

Economist Robin Hanson sees AIs as “our descendants” and thinks we should allow AI progress to run its course. He postulates that if advanced AI supersedes humanity, such entities would likely represent an adequate continuation of our species.

Hanson even frames efforts to align AI with human values as ethically questionable:

Hearing the claim that AIs may eventually differ greatly from us, and become very capable, and that this could possibly happen fast, tends to invoke our general fear-of-difference heuristic. Making us afraid of these “others” and wanting to control them somehow, such as via genocide, slavery, lobotomy, or mind-control.

Hanson highlights the gap between contemporary human values and those of earlier periods. Yet we uphold our current standards and would not have wanted our ancestors to impose their values on us.

Some direct quotes from Hanson’s article “Most AI Fear Is Future Fear”:

If long term change has on net been good, counting as progress, we can credit that in part to widespread ignorance of future change. Making moments of clarity like today’s AI vision especially dangerous. The world may well vote to stop this change. And then also the next big one. And so on until progress grinds to a halt.

Most respondents really do seem to be saying they worry far more about unaligned AI than unaligned humans, because they presume such humans must still share far more of what we value. But they really can’t explain much about why.